Making Sense of Mixed Performance Signals

Your podcast campaign numbers are in. Last-click attribution credits the conversion to Google organic search. Your post-purchase survey says it was a podcast. The promo code used? From another source. Before you assume your tracking is broken, here's what might be happening: podcast listeners rarely convert on a single touchpoint. Instead, they take a winding path to purchase, and each of your metrics captures a different step along the way.

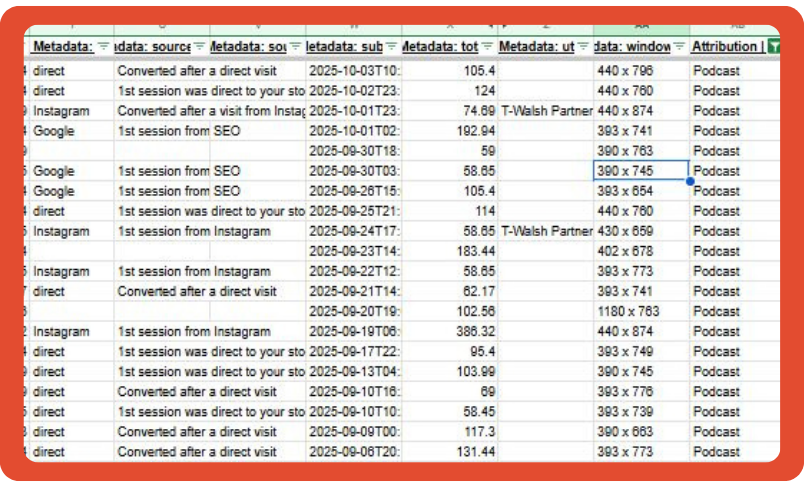

Recently, our VP of Partnerships, Elsie Kaplan, shared a real example of this on LinkedIn. Her anonymized customer dataset revealed just how differently pixels, surveys, and promo codes can interpret the same purchase journey, and why none of those signals is actually wrong.

If your data ever leaves you scratching your head, this post shows how to step back and look at the whole customer journey.

Why Your Metrics Don’t Match (And That's OK)

Elsie's example shows exactly what happens when you compare multiple signals side by side: they are unlikely to mirror each other, and that's the point.

None of the signals in this snapshot is wrong. Each one captures a different moment on the consumer’s path to purchase.

This is a common pattern: the survey says "podcast," the promo code used belongs to a different creator, the UTM classifies the visit as direct, and the customer writes in a show they listened to last month. It only feels contradictory if you expect every tool to measure the same interaction. But attribution isn't one interaction. It's a chain of them.

Pixels: What Customers Do

Pixels remain one of the strongest tools for tracking conversion behavior. They show you where the final conversion happened, which device was used, how users navigated between touchpoints, and whether branded search or retargeting played a role. Pixels give you behavioral accuracy. They record actions, not influence, and not memory.

Surveys: What Customers Remember

Post-purchase surveys fill in the influence layer: what the customer remembers. And memory is funny that way: customers often nail the channel (podcast!) but mix up the specifics (wait, which show was it?).

These surveys tell us where awareness began, which podcast episode sparked interest, which creator's endorsement resonated, and how offline or cross-channel exposure drove later online action.

In Elsie's dataset, pixel data pointed to one source while customers self-reported hearing about the brand on a completely different show. That isn't a contradiction. It's evidence of a multi-touch journey where discovery and conversion happen at different times.

Tools like Fairing, especially when integrated with Podscribe, turn these self-reported insights into structured, actionable data.

Promo Codes: What Customers Act On

Promo codes are directionally useful in that they show listener responsiveness, but they're also shared, remembered, and circulated. They point to action, not necessarily origin.

Coupon browser extensions such as Honey are often the culprit when we see massive code use on a show that has little to no pixel-tracked activity, such as visits or purchases. If we can’t digitally confirm that at least some podcast listeners came to your site after the ad, then it’s likely code leakage, not real podcast-driven sales.

Code use was once the “bible” for attribution, while folks remained skeptical of pixels, but oh how the tables have turned. This is why promo codes are valuable but not standalone proof of where a customer came from.
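To make the leakage check above concrete, here is a minimal Python sketch. It is an illustration, not our production logic: the `ShowStats` fields, the `likely_code_leakage` function, and both thresholds (`min_code_orders`, `max_pixel_ratio`) are hypothetical and should be tuned to your own data.

```python
from dataclasses import dataclass


@dataclass
class ShowStats:
    show: str
    code_orders: int   # orders that used the show's promo code
    pixel_visits: int  # site visits the pixel tied to the show's ad
    pixel_orders: int  # purchases the pixel tied to the show's ad


def likely_code_leakage(stats: ShowStats,
                        min_code_orders: int = 50,
                        max_pixel_ratio: float = 0.1) -> bool:
    """Flag heavy code use with little or no pixel-confirmed activity.

    Both thresholds are illustrative assumptions, not industry standards.
    """
    if stats.code_orders < min_code_orders:
        return False  # too little code use to call it "massive"
    pixel_activity = stats.pixel_visits + stats.pixel_orders
    return pixel_activity / stats.code_orders < max_pixel_ratio


# Made-up numbers for two hypothetical shows.
show_a = ShowStats("Show A", code_orders=400, pixel_visits=12, pixel_orders=3)
show_b = ShowStats("Show B", code_orders=120, pixel_visits=900, pixel_orders=80)

print(likely_code_leakage(show_a))  # True: many codes, almost no pixel activity
print(likely_code_leakage(show_b))  # False: pixel activity backs up the codes
```

A flag here is a prompt to investigate, not a verdict. A show with weak pixel coverage can trip it too.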

Triangulation: Where Clarity Emerges

Leading brands no longer chase a single source of truth. They embrace a multi-signal truth.

Here's how we approach it at ADOPTER: Pixels + Surveys + Promo Codes, supported by internal analytics, retail data, time-based patterns, and branded search trends.

This model reveals first touch versus last touch, true influence versus conversion path, which podcasts drive consideration, which episodes drive conversions, long-tail impact, and incremental revenue contribution.

Triangulation doesn't eliminate complexity. It simply makes it actionable. It’s a gut check on whether the numbers add up: the share of orders credited to podcast through code use plus pixel tracking should roughly equal the percentage of respondents to your post-purchase survey (PPS) who write in “podcast”. If it’s way off, you can begin looking for code leakage, or check the pixel settings to ensure you’re excluding referrer URLs.
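The gut check above can be sketched in a few lines of Python. This is a rough illustration under stated assumptions: the function names, the 5-point `tolerance`, and all the numbers are hypothetical, and it assumes each order is counted once (an order that both used a code and was pixel-matched is not double counted).

```python
def triangulation_gap(code_orders: int, pixel_orders: int, total_orders: int,
                      survey_podcast: int, survey_responses: int) -> float:
    """Tracked podcast share of orders minus the survey's podcast share."""
    tracked_share = (code_orders + pixel_orders) / total_orders
    survey_share = survey_podcast / survey_responses
    return tracked_share - survey_share


def needs_review(gap: float, tolerance: float = 0.05) -> bool:
    """True when the two shares differ by more than the chosen tolerance."""
    return abs(gap) > tolerance


# Made-up example: 200 of 1,000 orders tracked to podcast (20%),
# and 90 of 500 survey respondents wrote in "podcast" (18%).
close = triangulation_gap(120, 80, 1000, 90, 500)
print(needs_review(close))  # False: the signals roughly agree

# Same survey result, but code use balloons while pixel orders stay tiny.
off = triangulation_gap(400, 20, 1000, 90, 500)
print(needs_review(off))  # True: look for code leakage or pixel settings
```

A positive gap means tracking credits podcast with more than customers report, which points toward code leakage. A negative gap suggests the pixel or codes are missing podcast-driven orders.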

Incrementality: The Final Layer of Confidence

Incrementality answers the most important performance question: Would these results have happened without podcast ads? With the right structure, brands can measure lift in website traffic, lift in branded search, lift in retail, lift in new customers, and lift in total revenue.

It validates what triangulation already shows and ensures the channel's contribution is measurable, defensible, and scalable.
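As a simple illustration of the lift idea, the sketch below compares a campaign period against a baseline for each metric. It assumes you already have a comparable baseline (for example, a holdout market or a pre-campaign period); the `relative_lift` helper, the metric names, and the figures are all hypothetical, and a real incrementality test needs more care with seasonality and sample size.

```python
def relative_lift(baseline: float, observed: float) -> float:
    """Relative lift of the observed value over the baseline."""
    if baseline <= 0:
        raise ValueError("baseline must be positive")
    return (observed - baseline) / baseline


# Made-up weekly figures: baseline period vs. period with podcast ads running.
baseline = {"website_visits": 10_000, "branded_searches": 2_000, "new_customers": 400}
campaign = {"website_visits": 11_500, "branded_searches": 2_600, "new_customers": 460}

for metric, base_value in baseline.items():
    lift = relative_lift(base_value, campaign[metric])
    print(f"{metric}: {lift:.0%} lift")
```

The same calculation applies to retail sales or total revenue, as long as the baseline and campaign periods are measured the same way.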

Clarity Comes From Combining Signals

Mixed performance signals aren't mistakes. They're evidence of a multi-step journey. Pixels show behavior. Surveys show influence. Promo codes show action. Incrementality shows lift. Podscribe connects behavior to audio exposure.

Smart brands aren't looking for one clean answer. They're using multiple answers to see the full picture. That's how confusion turns into clarity.

A related takeaway from our Advertising Week panel reinforces this point: the most effective podcast ad campaigns come from evaluating multiple signals together, rather than leaning on any single metric.

Sherry Del Rizzo

Sherry leads ADOPTER Media’s inbound content marketing, SEO strategy, brand authority, and knowledge base development. Translation: she makes sure the agency’s expertise shows up in the right places, from search rankings to industry conversations. For her, marketing isn’t just about promotion; it's about translating ideas into content people actually want to engage with.